Each year, Major League Baseball writers vote on which player will receive the Most Valuable Player (MVP) Award for the season. The voters are members of the Baseball Writers’ Association of America (BBWAA), a collection of writers and journalists from newspapers, magazines, and websites across the country. In MVP voting, 30 members vote. The alternatives being ranked are the players.

A scoring method is a method of voting that assigns points on a fixed scale to each alternative being ranked, with higher-ranked alternatives receiving more points. Scoring methods differ from standard pairwise majority votes because they do not take only pairwise winners into account. Rather, the ordering of alternatives corresponds to a pre-established point scale, such that an individual’s highest-ranked alternative receives the most points. In this sense, a scoring method allows voters to demonstrate both their relative and absolute preferences for certain alternatives (relative meaning alternative 1 is preferred to alternative 2, absolute meaning alternative 1 is worth 10 points to the voter and alternative 2 is worth 5 points).

MVP voting uses a scoring method to determine the winner. The BBWAA’s official site (www.bbwaa.com/voting-faq) gives the specific point scale:

| Rank | Points |
|---|---|
| 1st | 14 |
| 2nd | 9 |
| 3rd | 8 |
| 4th | 7 |
| 5th | 6 |
| 6th | 5 |
| 7th | 4 |
| 8th | 3 |
| 9th | 2 |
| 10th | 1 |

As the point scale shows, there is a greater emphasis on being ranked first: the gap between first and second place is 5 points, while the gap between any other pair of adjacent ranks is 1 point. This is an important point, as it has implications for who will be selected as the winner. There are a couple of potential problems that could arise from the MLB’s use of a scoring method for its MVP voting, which I will examine.

Strategic voting can produce corrupt outcomes

Scoring methods are not immune to the concept of strategic voting. In MVP voting – or most ballots based on merit – you would generally expect the person receiving the majority of the first-place votes to be ranked highly on all ballots where he is not ranked first. It is unreasonable, for example, to expect a player who earned 28/30 first-place votes to be ranked 5th and 9th on the remaining two ballots if those two voters are unbiased. If two players are neck-and-neck in total points, then a malicious (or biased) voter may rank the player he does not want to win much farther down his preferences to assign him a lower point value when using a scoring method. This creates a greater differential between the person the voter wants to win and this second player. Let’s use the 2015 AL MVP Voting as an example, where Josh Donaldson was declared the winner.

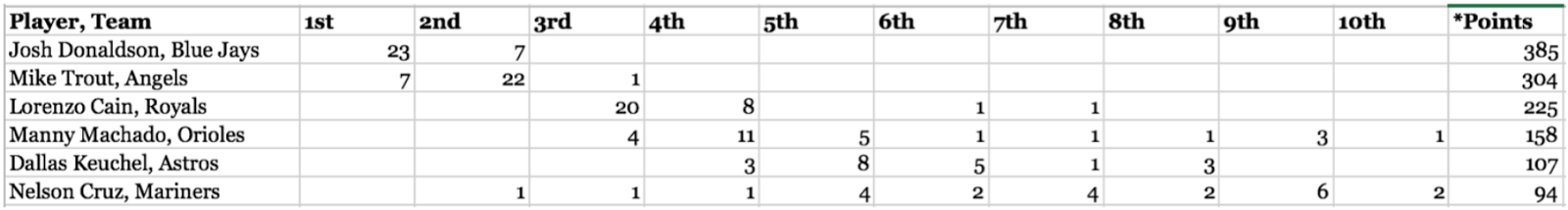

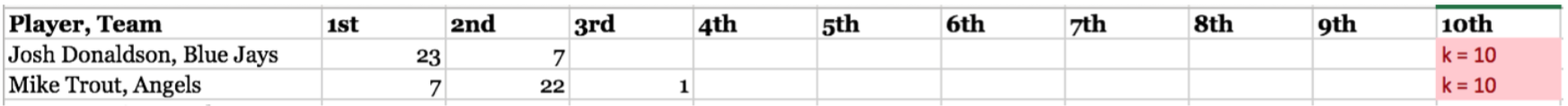

Using the standard 14-9-8-7-6-5-4-3-2-1 scoring method, Donaldson won the MVP voting with 385 points, which was 81 more points than the runner-up Mike Trout, who had 304 total points. 23 of the 30 voters ranked Donaldson first, earning him 14 points each. As you may expect from a merit-based vote where voters are unbiased (i.e., not strategically voting), the remaining 7 voters ranked Donaldson second, earning him 9 points each.
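The totals quoted above can be reproduced from the vote counts given in this post (Donaldson: 23 first-place and 7 second-place votes; Trout: 7 first-place, 22 second-place, and 1 third-place vote). The following is a minimal sketch; the names `BBWAA_POINTS` and `total_points` are made up for illustration and are not part of any official tool.

```python
# Points for ranks 1 through 10 under the 14-9-8-7-6-5-4-3-2-1 scheme.
BBWAA_POINTS = [14, 9, 8, 7, 6, 5, 4, 3, 2, 1]


def total_points(votes_by_rank, points=BBWAA_POINTS):
    """Sum a player's points.

    votes_by_rank maps a rank (1 = first place) to the number of
    ballots that placed the player at that rank.
    """
    return sum(points[rank - 1] * count for rank, count in votes_by_rank.items())


# Vote counts as described in the text (2015 AL MVP).
donaldson = {1: 23, 2: 7}
trout = {1: 7, 2: 22, 3: 1}

donaldson_total = total_points(donaldson)
trout_total = total_points(trout)
margin = donaldson_total - trout_total

print(donaldson_total, trout_total, margin)  # 385 304 81
```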

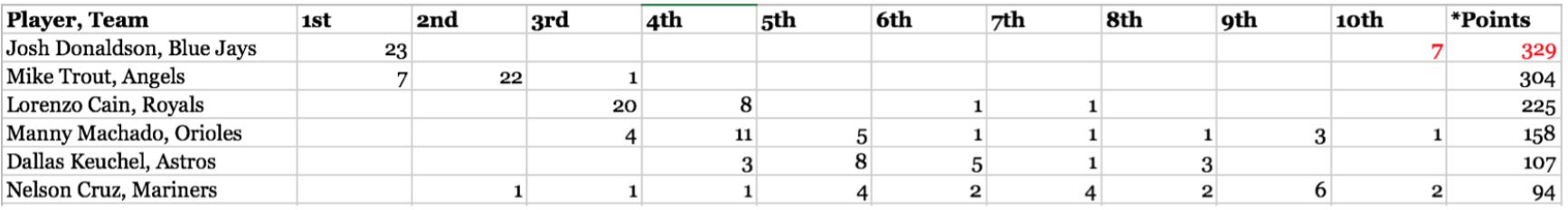

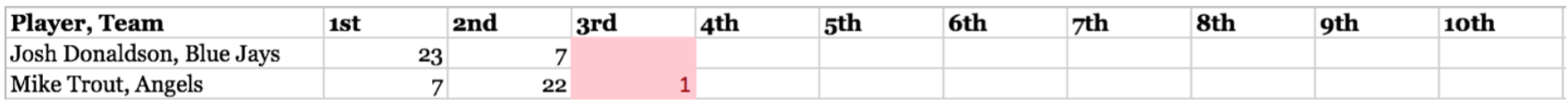

Now, let’s assume the remaining 7 writers – all of whom ranked Mike Trout as their first-place vote getter – had a strong incentive to see Mike Trout win. As Donaldson is Trout’s biggest competition, it would be in their best interest to rank Donaldson lower than second place on their ballots, so he would earn fewer points. If these 7 voters strategically placed Donaldson in 10th place, and arbitrarily replaced those second-place votes with players farther down the list with no chance of winning, we see a shift in the dynamic of the vote:

Strategic voting has turned a runaway winner (81-point differential) into a more tightly contested outcome (25-point differential). While the winner did not change in this example (mainly because Donaldson dominated the 1st-place votes), it is easy to imagine how strategic voting could change the outcome of a more closely contested race.
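To make the arithmetic of this hypothetical concrete, here is a small sketch that moves Donaldson's 7 second-place votes to 10th place and recomputes the margin. Trout's total is unchanged because the 7 strategic voters already ranked him first. The variable names are illustrative only.

```python
# Points for ranks 1 through 10 under the 14-9-8-7-6-5-4-3-2-1 scheme.
POINTS = [14, 9, 8, 7, 6, 5, 4, 3, 2, 1]


def total_points(votes_by_rank):
    """votes_by_rank maps a rank (1 = first place) to a ballot count."""
    return sum(POINTS[rank - 1] * count for rank, count in votes_by_rank.items())


trout = {1: 7, 2: 22, 3: 1}

# Sincere ballots: the 7 Trout voters rank Donaldson second.
donaldson_sincere = {1: 23, 2: 7}
# Hypothetical strategic ballots: the same 7 voters drop Donaldson to 10th.
donaldson_strategic = {1: 23, 10: 7}

sincere_margin = total_points(donaldson_sincere) - total_points(trout)
strategic_margin = total_points(donaldson_strategic) - total_points(trout)

print(sincere_margin, strategic_margin)  # 81 25
```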

Different winners can be produced through different point assignments

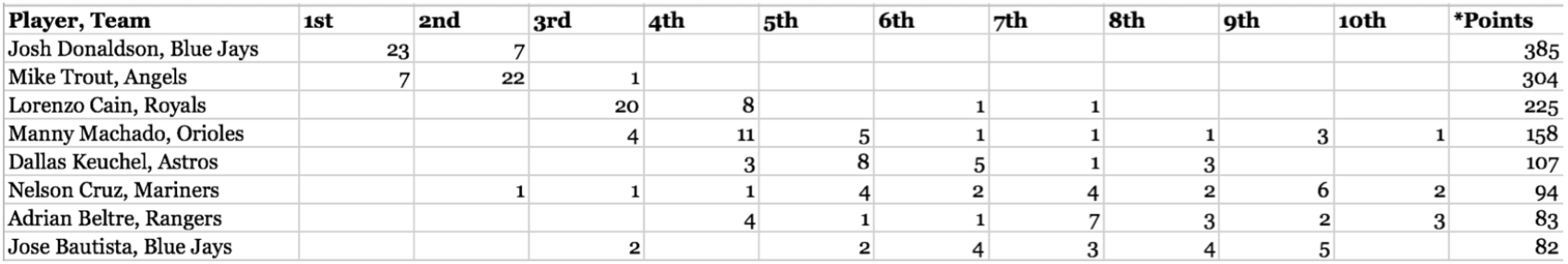

Another criticism of scoring methods is that changing the point values assigned to each ranking can produce a new winner. This occurs due to the underlying principle of using a scoring method: the player earning the most first-place votes (the plurality winner) is not guaranteed to be ranked first collectively. Migrating to a different point scheme for MVP voting in the MLB – which one may argue is a completely arbitrary scoring method as is – may produce a different result. We can expand the same data set from the 2015 AL MVP voting to examine this theory. Using the MLB’s 14-9-8-7-6-5-4-3-2-1 scoring method, the top-8 MVP vote-getters were Donaldson, Trout, Cain, Machado, Keuchel, Cruz, Beltre, and Bautista, in that order.

This outcome is predicated on the fact that the difference between a first-place and second-place vote (5 points) is five times as large as the difference between any other adjacent votes (1 point). In other words, a first-place vote is as much a step up from a second-place vote as a second-place vote is from a seventh-place vote!

Let’s assume the BBWAA adopts a different mindset; rather than making the first-to-second-place differential five times as large as any other, it creates a more progressive drop-off in point values from the higher to lower ranks. The new point scheme is 14-11-9-7-6-5-4-3-2-1. The biggest differential still comes between first and second place (which is probably logical, as being ranked first is the highest honor). However, it is not as pronounced as it was before: it is now 3 points, where it used to be 5. Furthermore, a second-place vote is now worth 2 points more than a third-place vote, and a third-place vote is worth 2 points more than a fourth-place vote. The rest of the scheme is the same.

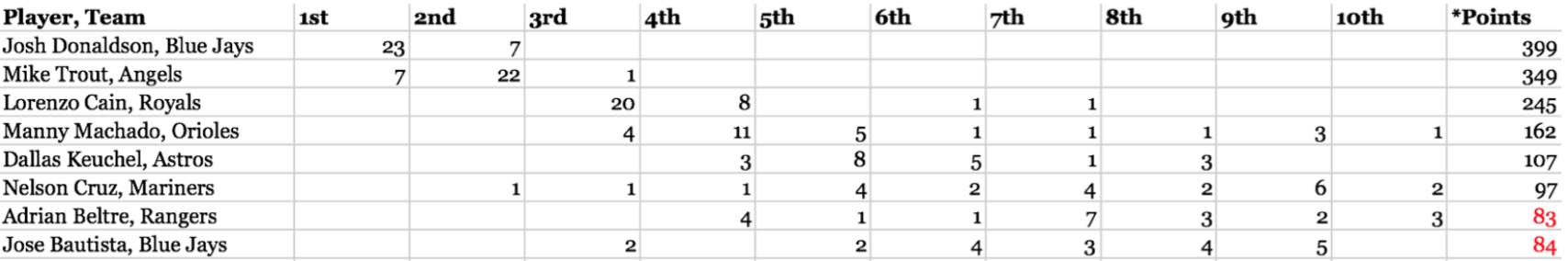

This new voting scheme is in many ways more practical than the original. While it still places the largest emphasis on being ranked first, it also emphasizes being ranked in the top three, which is not uncommon in scoring methods (think of gold, silver, and bronze medals in sporting events). No emphasis is placed on earning a top-three vote in the BBWAA’s original scheme, as the differentials are constant at 1 after the drop-off from first to second place. Examining our new point scheme, we get the following results:

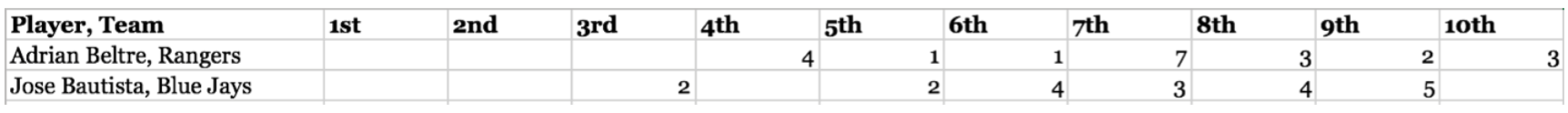

Most notably, Adrian Beltre and Jose Bautista would flip in total points under our modified scoring method (Beltre would drop to 8th, and Bautista would rise to 7th). Swaps like this have real implications for incentive clauses in players’ contracts, and can cost players millions of dollars. Beyond this swap, we see the differential between Donaldson and Trout drop from the original 81 points to 50 points. Thus, in a more contentious year – where the top point earner received a mix of first, second, and third-place votes – changing the scoring method could change the actual winner of the MVP award.
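The narrowing gap between Donaldson and Trout can be checked directly from the vote counts stated in this post. The sketch below compares the two point schemes for those two players only, since the full ballots for the other players appear only in the tables above. Function and variable names are illustrative.

```python
ORIGINAL = [14, 9, 8, 7, 6, 5, 4, 3, 2, 1]
# The alternative scheme proposed in this post.
MODIFIED = [14, 11, 9, 7, 6, 5, 4, 3, 2, 1]


def total_points(votes_by_rank, points):
    """votes_by_rank maps a rank (1 = first place) to a ballot count."""
    return sum(points[rank - 1] * count for rank, count in votes_by_rank.items())


donaldson = {1: 23, 2: 7}
trout = {1: 7, 2: 22, 3: 1}

# Map each scheme to the (Donaldson, Trout) totals it produces.
totals = {
    name: (total_points(donaldson, scheme), total_points(trout, scheme))
    for name, scheme in [("original", ORIGINAL), ("modified", MODIFIED)]
}

for name, (d, t) in totals.items():
    print(f"{name}: Donaldson {d}, Trout {t}, margin {d - t}")
# original: Donaldson 385, Trout 304, margin 81
# modified: Donaldson 399, Trout 349, margin 50
```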

Jean-Pierre Benoit, an economist from New York University, examines this point in greater detail. Since Benoit worked directly with the Baseball Hall of Fame association, he had access to ballots from 1943 to 1989, whereas our data came from the most numerically contentious year in recent history for which ballots are still available.

In Benoit’s data, 24 out of his 86 cases could have produced different MVP award winners based on a switch to another “reasonable” scoring method. Of these 24 instances, 19 corresponded to the second-place finisher becoming the new MVP, and 5 to the third-place finisher. Benoit presents an algorithm to determine, for any ranking of alternatives, whether changing the scoring method can produce a different outcome. He asserts that if we define a value $r_{ik}$ as the number of voters who rank player $i$ at position $k$ or higher, then player $i$ can be ranked above player $j$ using some scoring method if and only if $r_{ik} > r_{jk}$ for at least one position $k$. Put in layman’s terms (and in the context of MVP ballots), if you start from a player’s 10th-place votes and progressively move up to his 9th, then 8th, then 7th, all the way up to his 1st-place votes, you must find some index (place) along the way where he has more total votes from that index to 1st place than another player has from that same index to 1st place. We can examine this with an example that will demonstrate why, no matter what scoring method was used, Josh Donaldson would have been named the AL MVP in 2015.

We start with the index k = 10. Both players have the same number of votes at 10th place or higher (Donaldson has 23 + 7 = 30, and Trout has 7 + 22 + 1 = 30). Skipping a few repetitive steps, we see that this is also the case for every k-value down to 3, since neither player received any votes between 4th and 10th place. Once we get to 2nd place, though, something changes.

At a k-value of 2, Donaldson has more total votes at second place or higher (23 + 7 = 30) than Trout (7 + 22 = 29). Immediately through our algorithm, we know that there exists a scoring method that would make Donaldson the MVP over Trout. We could also view this from the perspective of Trout. If we make k = 1, then we see Donaldson still has more votes (23) than Trout (7). Thus, we have examined all possible k-values for Trout, and we never found an index at which he has more high-ranking votes than Donaldson. This means that no scoring method could rank Trout above Donaldson.
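The check described here can be sketched in a few lines. This is an illustration of the condition as stated in this post, not Benoit's own code: for each position k it counts votes at position k or higher (so k = 1 counts only first-place votes) and looks for any k where one player strictly leads. The helper names `cumulative_counts` and `can_outrank` are hypothetical.

```python
from itertools import accumulate


def cumulative_counts(votes_by_rank, n_ranks=10):
    """Return [r_1, ..., r_n], where r_k is the number of ballots that
    rank the player at position k or higher (1 = first place)."""
    per_rank = [votes_by_rank.get(rank, 0) for rank in range(1, n_ranks + 1)]
    return list(accumulate(per_rank))


def can_outrank(a, b, n_ranks=10):
    """True if player a has strictly more votes than player b at
    position k or higher for at least one k."""
    ra = cumulative_counts(a, n_ranks)
    rb = cumulative_counts(b, n_ranks)
    return any(x > y for x, y in zip(ra, rb))


donaldson = {1: 23, 2: 7}
trout = {1: 7, 2: 22, 3: 1}

print(cumulative_counts(donaldson)[:3])  # [23, 30, 30]
print(cumulative_counts(trout)[:3])      # [7, 29, 30]
print(can_outrank(donaldson, trout))     # True
print(can_outrank(trout, donaldson))     # False
```

With this convention, Donaldson leads at k = 1 (23 to 7) and k = 2 (30 to 29), and the two are tied at 30 for every k from 3 to 10, so Trout never leads.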

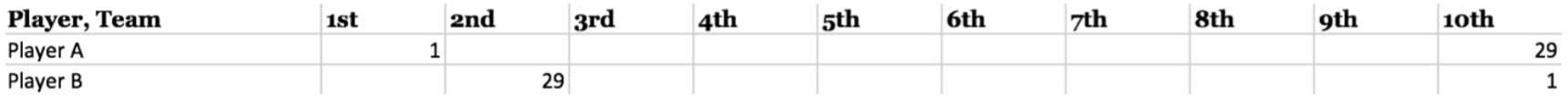

Logically, this makes perfect sense. A player would need to be more “top-heavy” in votes than another at a certain point for a scoring method to potentially rank him higher. If we consider player A with one first-place vote, and twenty-nine tenth-place votes, and player B with twenty-nine second-place votes, and one tenth-place vote, we can easily see how different scoring methods would produce different winners.

At a k-value of 10, the two players are tied, since each appears on all 30 ballots. At any k-value from 9 down to 2, player B has more total votes at that position or higher (29) than player A (1). Thus, we know a scoring method exists that would make him the winner. At a k-value of 1, though, player A has more votes (1) than player B (0). Thus, we know that player A would also be a winner under some scoring method. This is a case where the scoring method determines the outcome of the vote. Using an absurd example to quickly demonstrate the idea, if first place was worth 1,000 points and 2nd through 10th place were worth single-digit values each, player A would obviously win the award. Conversely, if first place was worth 10 points and second place was worth 9 points (and 10th was worth one point, for example), it is obvious that player B would be the MVP. The algorithm makes no claim about how logical the scoring method need be; rather, it identifies the condition under which it is possible for an alternative to win (or swap above another alternative in the collective ranking) given a certain set of ballots.
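The two extremes can be scored directly. In the sketch below, the specific point lists are assumptions chosen to match the description (1,000 points for first place with single-digit values after it, versus a 10-9-...-1 scale); any scales with the same shape would make the same point.

```python
def total_points(votes_by_rank, points):
    """votes_by_rank maps a rank (1 = first place) to a ballot count."""
    return sum(points[rank - 1] * count for rank, count in votes_by_rank.items())


# Hypothetical ballots from the text.
player_a = {1: 1, 10: 29}   # one first-place vote, twenty-nine tenth-place votes
player_b = {2: 29, 10: 1}   # twenty-nine second-place votes, one tenth-place vote

# Assumed scales: an extreme top-heavy one and an evenly spaced one.
top_heavy = [1000, 9, 8, 7, 6, 5, 4, 3, 2, 1]
evenly_spaced = [10, 9, 8, 7, 6, 5, 4, 3, 2, 1]

a_top, b_top = total_points(player_a, top_heavy), total_points(player_b, top_heavy)
a_even, b_even = total_points(player_a, evenly_spaced), total_points(player_b, evenly_spaced)

print(a_top, b_top)    # 1029 262 -> player A wins
print(a_even, b_even)  # 39 262   -> player B wins
```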

Briefly, we can see how this algorithm predicted Bautista and Beltre’s reversal from our earlier example:

If you recall, Beltre and Bautista flipped in rankings when we proposed an alternative (and reasonable) scoring method for MVP voting. Knowing our algorithm, we can see why: Bautista has more votes above 10th place than Beltre, while Beltre has more votes above 8th place than Bautista. Each player leads the other at some position, so some scoring method favors each of them.

Concluding Thoughts

MVP voting in the MLB is as susceptible to ranking reversals as any other system using a scoring method. It is unclear why the 14-9-8-7-6-5-4-3-2-1 scheme was adopted as the de facto standard for MVP voting; while the drop-off of 5 points from 1st to 2nd place is not unreasonable by any means, our proposal to place a higher emphasis on a top-three finish is just as reasonable. Adopting a Borda Count – where the point discrepancies between adjacent rankings are constant at 1 – may address this issue, but in truth, how can a discrepancy of one point between first- and second-place truly convey the significance of being a voter’s top choice for the most valuable player award? Some discrepancy between adjacent rankings – especially towards the highest ranks – is probably needed, but finding the sweet-spot will always be a challenge and subject to scrutiny.

This post uses data and an algorithm from Jean-Pierre Benoit’s “Scoring reversals: a major league dilemma.”